Intel’s Bleep AI monitors hate speech, but lets you toggle racism and LGBTQ+ hate if you’d like

No, we don’t know why they thought this was a good idea.

For some unknown reason, Intel, one of the biggest computer chip producers in the world, used its GDC 2021 Showcase to unveil Bleep. Bleep is an AI tool that monitors online gaming chats and blocks out hate speech, and it comes complete with sliders and toggles that control how much of each kind gets filtered. Why a chip company felt it needed to break into this industry is anyone's guess.

So, let's break this down a little bit. Intel has grown into one of the top computer chip producers in the world, with its name on PCs and laptops everywhere. For whatever reason, the company decided that its expertise in chips qualified it to build Bleep, an AI that aims to put a stop to one of the oldest truths about the internet: some people are just assholes.

Let me shift gears a little by saying that I don't think Intel is completely in the wrong. I like the idea of a company stepping up, saying it won't tolerate this kind of behavior, and trying to do something about it. But Bleep just doesn't look like the right answer to me.

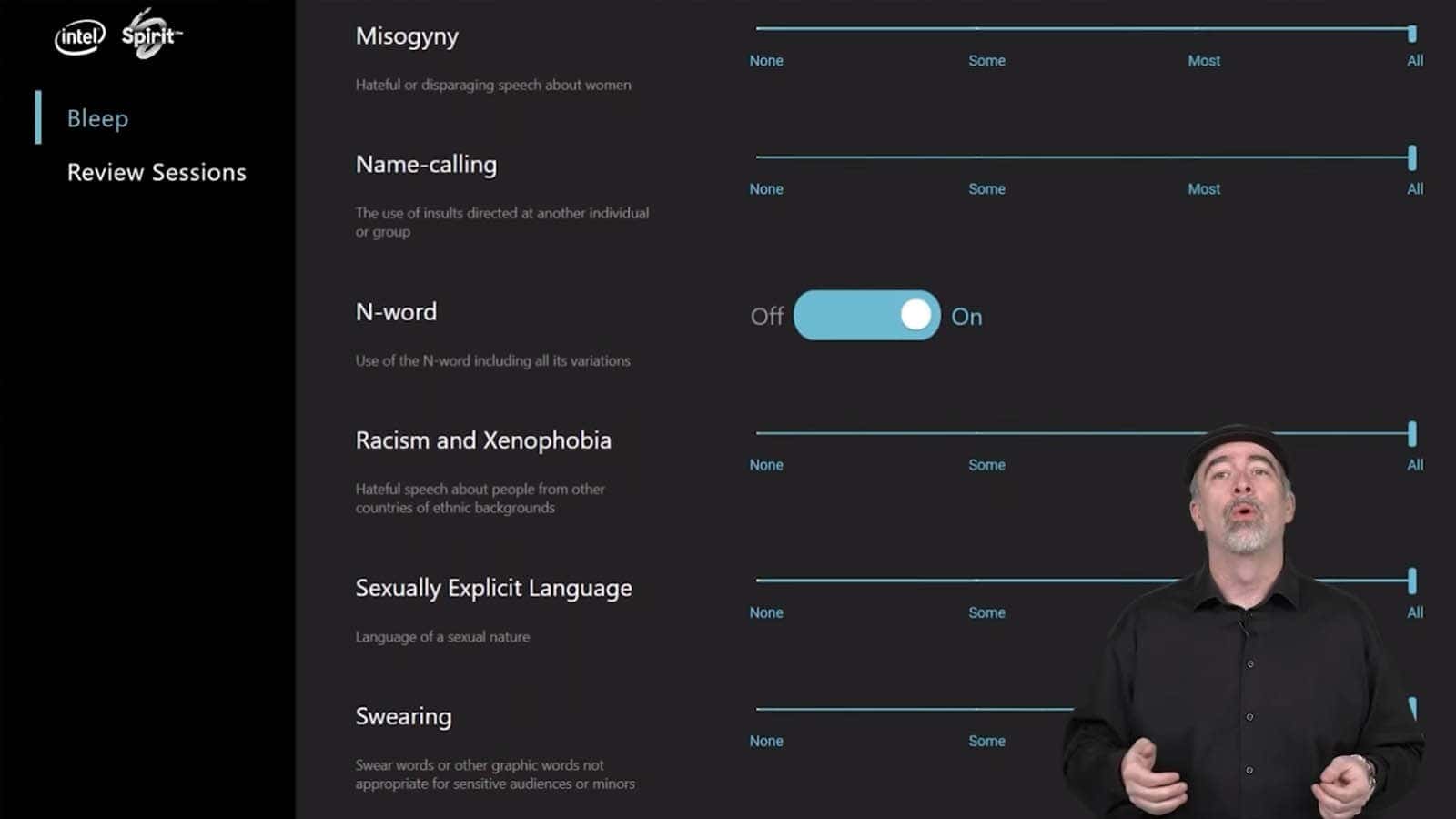

The main problem here is pretty obvious. The AI’s user interface is full of sliders and toggles that can be set up to allow certain amounts of hate speech to bypass the system’s blockers. This all just seems kind of outrageous to me. There are sliders for things like “Racism and Xenophobia” and “LGBTQ+ hate.” It seems to me that something like this should be geared towards blocking out hate speech completely and not letting users decide which hate speech to tolerate.

At a surface level, breaking hate speech into categories like these makes sense when you're trying to block it. But with Bleep, users can set those sliders to let some, or even all, of the hate speech in a given category pass through unfiltered. Maybe it's just me, but it seems like hate speech is just hate speech.

The bottom line for me is that people are assholes, and hate speech is hate speech. The issue with an AI like this is that it can only be programmed to recognize certain words or phrases as negative. A system like this will never fully grasp the complex context that surrounds some of the trigger words it's trained to listen for. So while some words may get blocked (which is still a good thing), the intent behind what these assholes are saying is still going to come through.

Something like this is never going to replace the trusty mute key. Online chats, especially in video games, are full of nasty, sad people, and sometimes the best thing you can do is just mute them, but I like to take a more proactive approach. More and more games these days are starting to let players know when a gamer they've reported has received disciplinary action, and there's no better feeling than reporting some idiot for hate speech and then seeing later that they've been banned because of your actions.

While I do love the fact that Intel has identified this as a problem, Bleep doesn’t look like the answer. Blocking some, but not all, hate speech is kind of silly, and AI is going to have trouble getting to the root of the issue. But it is good to see companies beginning to address these issues.
