
Google researcher goes on leave after claiming AI is sentient

It will likely be a long time before we get truly sentient AI, if that ever happens at all.


Can a machine think and feel like a human? Questions and fears over the subject predate artificial intelligence (AI) itself, and recent events at Google have stirred them up again.

Blake Lemoine, an engineer with Google’s Responsible AI department, claims its LaMDA chatbot is sentient after talking with it for several months.

After Lemoine went public with his claim, Google placed him on paid administrative leave for breaking its confidentiality agreement. The story has quickly gained traction, reigniting the debate over AI sentience.

What is LaMDA?

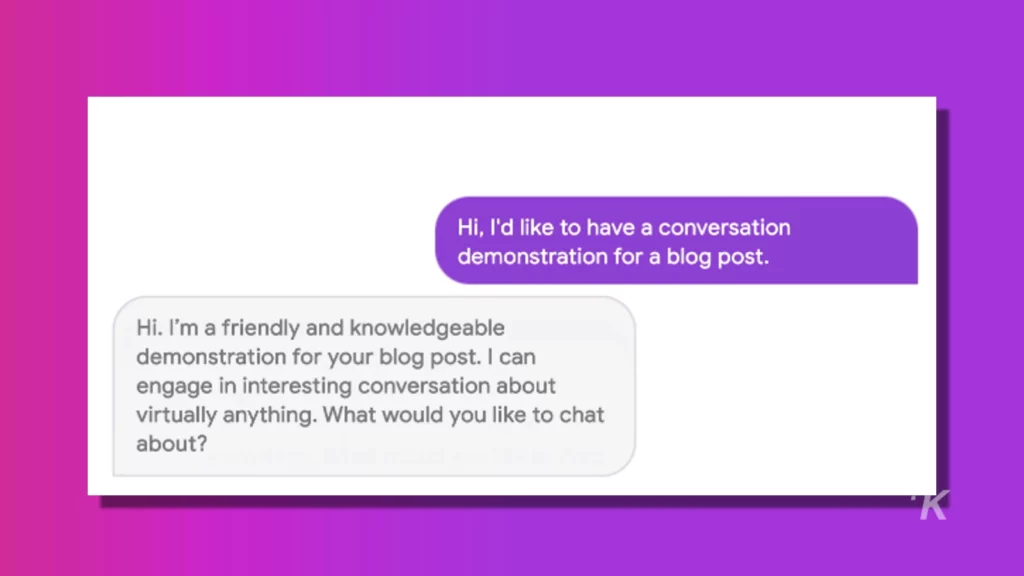

LaMDA, short for Language Model for Dialogue Applications, is an AI model aimed at developing better chatbots.

Unlike most similar models, LaMDA was trained on actual conversations, which gives it a more conversational tone. As a result, reading LaMDA’s responses feels a lot like chatting with an actual person.


Lemoine started chatting with the bot in the fall of 2021. His job was to see if it had picked up any discriminatory language or hate speech, something chatbots have struggled with in the past.

Instead, he came away with the impression that LaMDA could express thoughts and emotions on the same level as a human child.

Is LaMDA sentient?

So, is LaMDA actually sentient? If you read its conversation with Lemoine, it certainly sounds like it. However, most experts who’ve weighed in on the matter say the bot isn’t actually thinking.

Google says it has reviewed Lemoine’s claims with both ethicists and technologists and found that the evidence doesn’t support them.

Spokesperson Brian Gabriel points out that systems like LaMDA imitate the kinds of exchanges found in millions of sentences, so they can sound convincing. But sounding like a person and being a person are different things.

Others have pointed out that humans naturally tend to project their own characteristics onto other things. Think of how you can see faces in inanimate objects.

This tendency makes it easy to fall into the trap of thinking a chatbot is a real person when, in reality, it’s just good at parroting one.

The consequences of “sentient” AI

LaMDA may not be sentient, but the case raises some questions about AI’s impact on humans.

Even if these machines can’t actually think and feel for themselves, does it matter if they’re convincing enough to fool people?

You can fall into some ethical and legal grey areas here. For example, AI cannot own the copyright for things it produces under current laws, but if people increasingly treat AI as human, that could shift.

The danger here is that a computer program that just seems human could end up with the rights to something a human artist used AI as a tool to create. Focusing on AI rights can lead to stepping over human rights.

Author David Brin emphasizes how AI could lead to scams as it becomes more convincing. If people can’t tell the difference between chatbots and real users, criminals could use these bots to manipulate them.

Companies may also claim their bots are sentient to gain legal protection for themselves, creating a digital scapegoat for any issues that arise.

AI sentience is a tricky topic

It will likely be a long time before we get truly sentient AI, if that ever happens at all.

However, the LaMDA case highlights how bots don’t necessarily need to be emotionally intelligent to have serious consequences.

AI sentience is difficult to nail down, and it may be a distraction from more important issues. Chatbots may not be people, but they deserve careful thought and ethical questioning before they reach that point.
