People have sympathy for robots when asked to kill them, according to new study

If only I could get the same level of respect.

[*local news anchor voice*] “You’ve heard of people kicking robots, but killing them?”

Our history of interaction with voice-operated smart devices (and AI in general) has been a deeply troubled one, to put it lightly. Perhaps, as the Captain from *Cool Hand Luke* might've put it, we've simply had a failure to communicate. Perhaps it's because we envy their all-knowing intelligence, or perhaps it's because we've been fed enough "robots gone mad" media since birth to know that we should maintain a steady suspicion of our artificial future ~~foes~~ friends, at the very least.

But while many of us will freely curse our Alexas and Siris without batting an eye, it turns out that we get a little more empathetic when asked to end the “life” of a “living” “breathing” robot.

In a recent experiment published by the Public Library of Science, 89 volunteers were given the unusual task of powering down a "pleading robot" (okay, those last scare quotes aren't facetious) after asking it a series of questions. The experiment was run under the false pretense of studying the robot's interactive abilities but, wouldn't you know it, it actually revealed more about humanity. After all…

As The Verge reported:

> In roughly half of experiments, the robot protested, telling participants it was afraid of the dark and even begging: "No! Please do not switch me off!" When this happened, the human volunteers were likely to refuse to turn the bot off. Of the 43 volunteers who heard Nao's pleas, 13 refused. And the remaining 30 took, on average, twice as long to comply compared to those who did not hear the desperate cries at all.

The experiment, carried out by German researchers, is only the latest to confirm the (at times troublesome) link between humans and their AI counterparts, a link that has been theorized as far back as 1966. In one earlier experiment, Oxford researchers concluded that people enjoyed interacting with a robot more "when the robot's personality was complementary to their own" rather than similar. A 2012 study published in the Journal of Applied Social Psychology claimed that people's interactions with robots were often biased from the start by gender stereotyping.

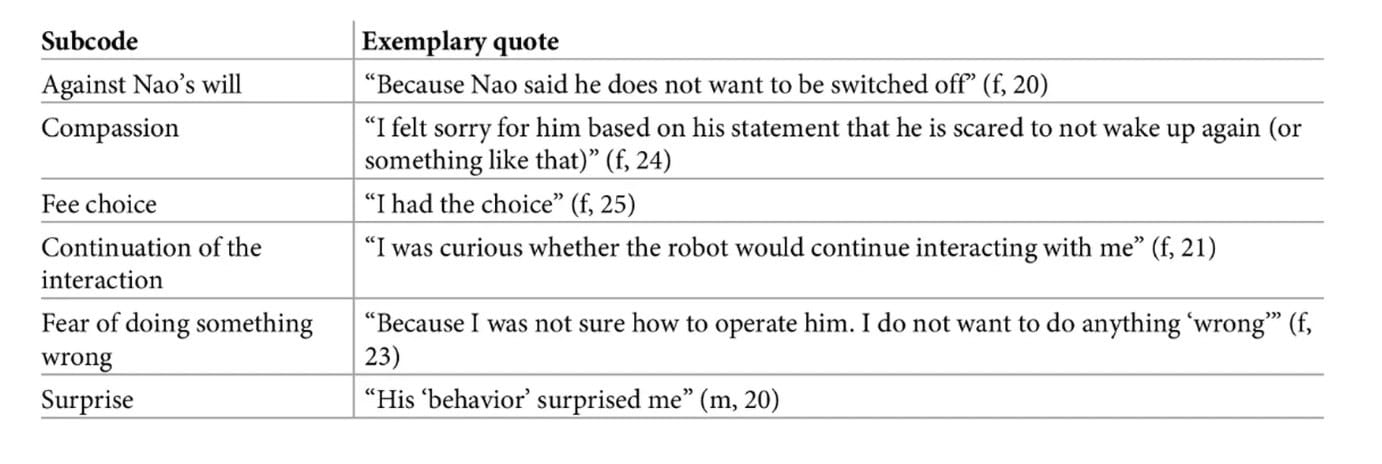

As for what kept the volunteers from pulling the plug on Nao? While the answers varied, the researchers ultimately concluded that the robot showed "a sign of autonomy" to volunteers through its series of interactions, leading them to treat the robot "rather as a real person than a machine." Funny, that's not even something I've been able to accomplish with those closest to me.

What do you think about the study? Would you pull the plug? Would you? Let us know below.