
You can now prevent your online photos from being used by facial recognition systems

Screw you, I wasn’t even there.


Day by day, we live in a world that looks more and more like the bastard lovechild of 1984 and The Man in the High Castle. We are on the verge of Brave New World, except we don't have soma keeping us all in a state of utopian bliss. Instead, "they" just expect us to do as we're told. They want us to blindly follow whatever we're fed on social media. We don't even manage to behave like good little worker ants; instead, we squabble amongst ourselves.

However, the revolution is here, my friends. It is time to fight back against our evil oppressors and yell “ENOUGH.”

Or perhaps we could just mess things up a bit and fool some facial recognition software. Yeah, that'll show 'em. Well, actually, it will. The geniuses over at the University of Chicago SAND Lab have been busy developing exactly that: a program called Fawkes, which has arrived to detonate a bunch of gunpowder right below Clearview AI's seat.

Big Brother is watching

Image: Unsplash

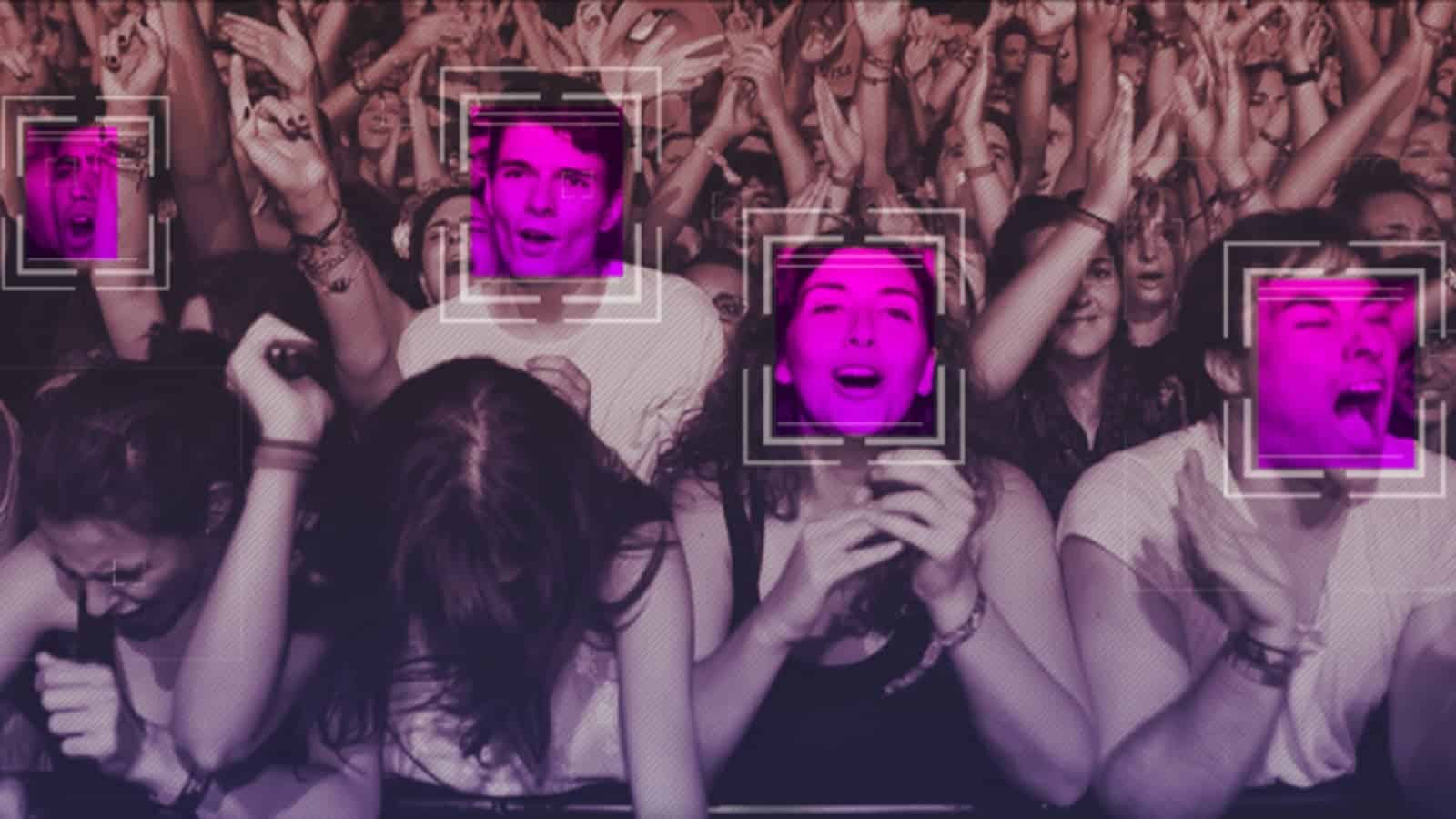

OK, so we all know that we are under surveillance almost 24/7. When you walk down the street, cameras watch you. When you enter a store, cameras watch you. When you look at your smartphone, that's right, cameras watch you. Everywhere we go, it seems, cameras are recording our every move. And not only that, but they're stealing your face, too. Facial recognition can be used for a variety of things, from identifying you (obviously) to putting your likeness into deepfake videos and applications. This is why researchers at the University of Chicago are so keen to stop the tidal wave of nefarious AI.

If this becomes a public-friendly app, as the developers (eventually) intend, it could stop Clearview AI dead in its tracks. Which can only be a good thing, right? It is designed to work with any ordinary photo: you would simply run the image through the app and then share it as you normally would.

How does Fawkes work?

Image: Ahmed Zayan on Unsplash

The Fawkes software works by changing the image very slightly, not enough for a human to detect. The changes are made at the pixel level and are very subtle, but they are enough to confuse facial recognition software. The researchers call this process "image cloaking." The result is technically a different image, though you couldn't tell by looking at it.

They explain it like this: "The difference…is that if and when someone tries to use these photos to build a facial recognition model, 'cloaked' images will teach the model a highly distorted version of what makes you look like you. The cloak effect is not easily detectable by humans or machines and will not cause errors in model training. However, when someone tries to identify you by presenting an unaltered, 'uncloaked' image of you (e.g. a photo taken in public) to the model, the model will fail to recognize you."
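To make the "pixel-level" part a little more concrete, here is a minimal Python sketch of a bounded perturbation, where no pixel of a grayscale image is allowed to move by more than a small budget. To be clear, this is not Fawkes' code. The function names and the budget value are hypothetical, and the real tool does not use arbitrary noise: as we understand it, it calculates its changes by optimizing against a face recognition model so that they distort what the model learns about you. The sketch only illustrates why changes of this size are hard for a human to spot.

```python
import random


def clamp(value, low, high):
    """Keep value within [low, high]."""
    return max(low, min(high, value))


def apply_bounded_perturbation(image, perturbation, budget):
    """Hypothetical helper: add a per-pixel change to a grayscale image
    (rows of 0-255 ints), limiting each change to +/- budget levels and
    keeping every result a valid pixel value."""
    cloaked = []
    for row, delta_row in zip(image, perturbation):
        new_row = []
        for pixel, delta in zip(row, delta_row):
            limited = clamp(delta, -budget, budget)  # cap the size of the change
            new_row.append(clamp(pixel + limited, 0, 255))  # stay in 0-255
        cloaked.append(new_row)
    return cloaked


def max_pixel_change(original, modified):
    """Largest absolute difference between any two corresponding pixels."""
    return max(
        abs(a - b)
        for row_a, row_b in zip(original, modified)
        for a, b in zip(row_a, row_b)
    )


if __name__ == "__main__":
    rng = random.Random(0)
    # A random 8x8 "photo" and a random proposed change. A real cloaking
    # tool would compute the change with an optimizer, not pick it randomly.
    image = [[rng.randint(0, 255) for _ in range(8)] for _ in range(8)]
    proposed = [[rng.randint(-20, 20) for _ in range(8)] for _ in range(8)]
    cloaked = apply_bounded_perturbation(image, proposed, budget=3)
    print("largest change:", max_pixel_change(image, cloaked))
```

Running it prints a largest change of no more than 3 brightness levels out of 255, which is the kind of difference your eyes simply skip over.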

The future of AI facial recognition

So, it would seem we can continue our lives like the worker ants that we barely are. Except now we don’t have to worry about some sort of horrific deepfake crisis arriving at our door. Well, we can worry a bit less, anyway.

Good on the researchers at the University of Chicago SAND Lab. This is a step forward in the fight to keep our liberties from disappearing completely. I wonder if there is any way they can stop Elon Musk from playing fucking U2 directly into my cerebral cortex, though?

What do you think? Are you worried about facial recognition? Let us know down below in the comments or carry the discussion over to our Twitter or Facebook.
