Facebook will now let you know if a page constantly shares fake news

Will it really make a difference though?


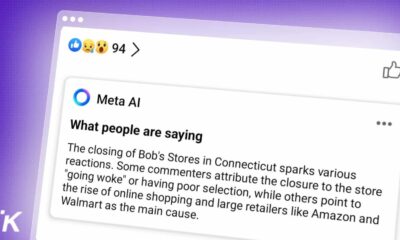

Since the last election cycle, Facebook has been taking steps to alert users to posts that contain provably false information. Now, the company has published a blog post detailing new steps it is taking to discourage people from engaging with pages that have a habit of sharing fake news.

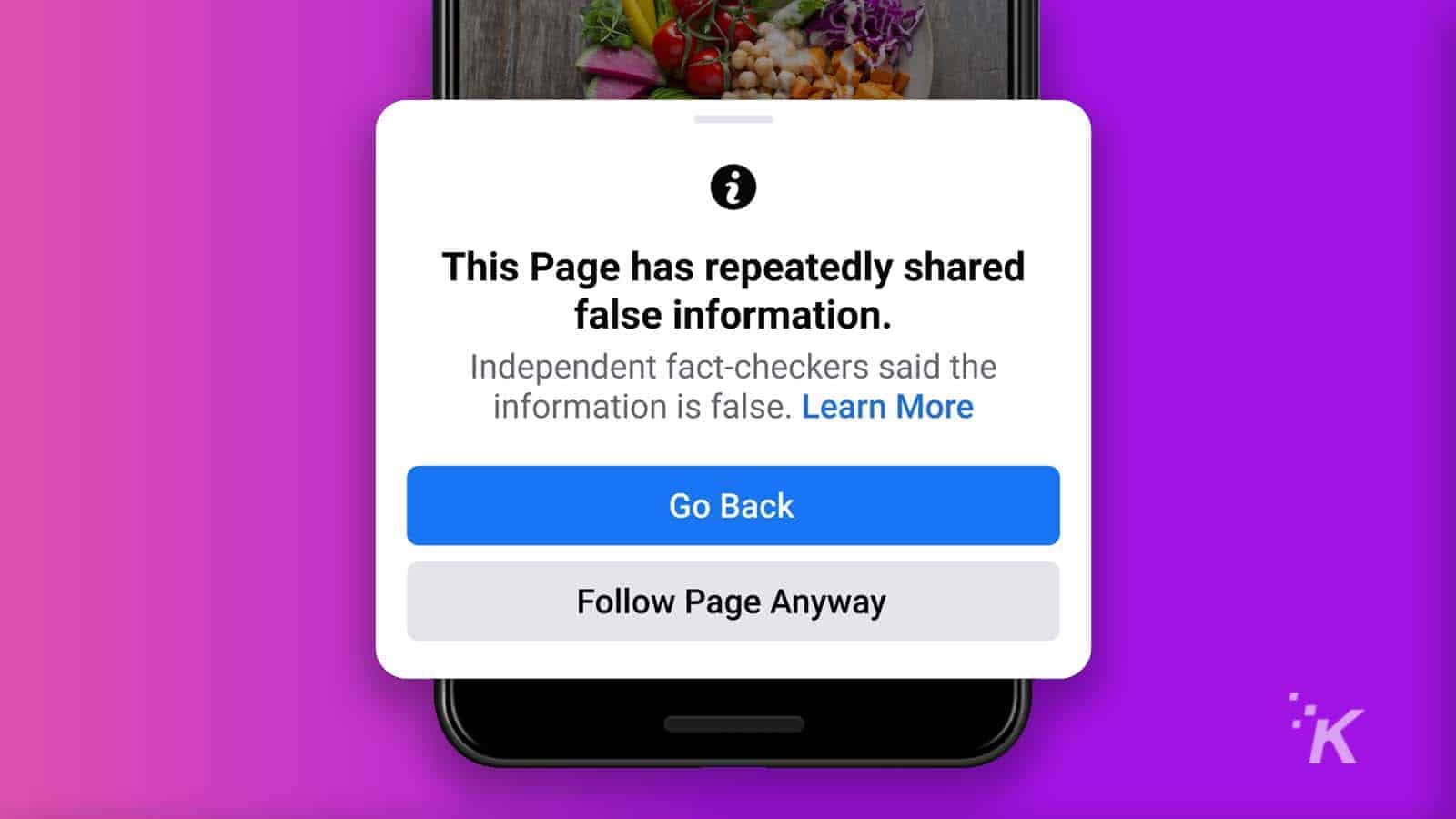

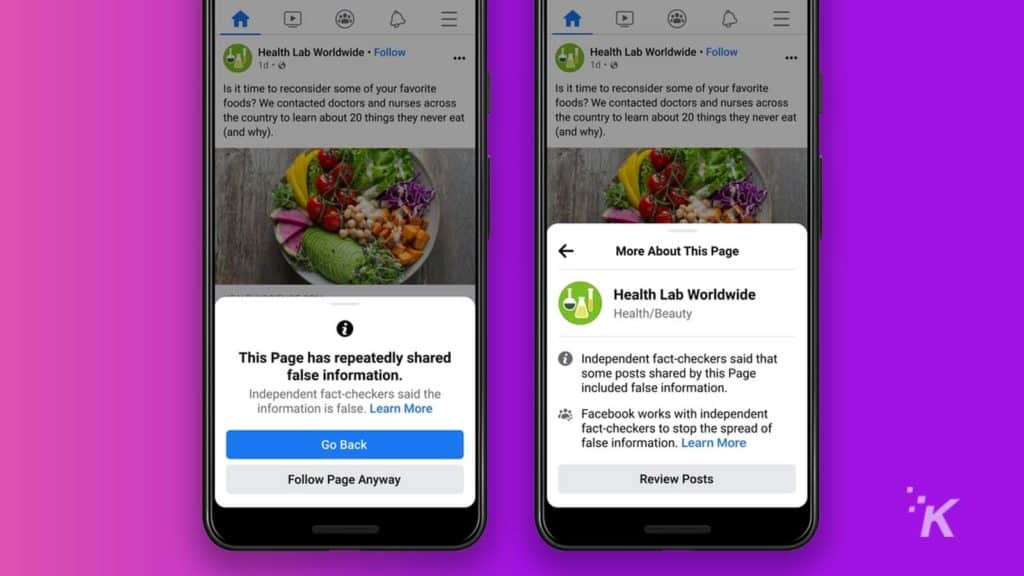

Essentially, Facebook will now alert people who go to like a page that the page “has repeatedly shared false information.” You will then have the option to turn back and not like the page, or like it anyway.

In addition, users will be able to click on “learn more” links that point to verified sources, as well as one that provides more information about Facebook’s fact-checking program.

That’s not all Facebook is doing, however. The company will also start showing less content from individuals who have a history of sharing links containing false information. Previously, this was limited to pages and groups, but it now extends to individual accounts as well.

Finally, the company is redesigning its notifications regarding posts that contain false information. Facebook is making the information in these notifications clearer and will give people the option to share the fact-checked article instead.

Overall, it’s good to see Facebook making these changes, but at the end of the day, fake news still runs rampant on the platform, so we’ll have to see if this actually makes much of a difference.

