Facebook deletes seven million posts spreading false Covid-19 information

Also deleted: 22.5 million hate speech posts.


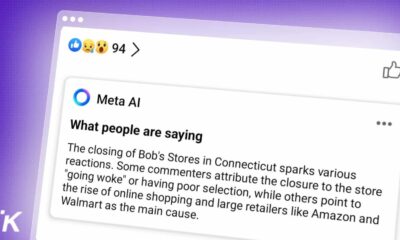

This week, Facebook announced that it deleted seven million posts for sharing false news and information related to the coronavirus. Deleted posts included content that spread false news of Covid-19 cures and incorrect preventive measures.

The data was released as part of its Community Standards Enforcement Report, the company’s sixth such report since 2018. Facebook introduced the report in response to the many accusations it received over its handling of hateful content spread on its platform.

Facebook, by far the biggest social network, said it plans to invite proposals from experts to audit the metrics used in the Community Standards Enforcement Report.

Thanks to improvements in detection technology, Facebook also deleted around 22.5 million hate speech posts in the second quarter alone. That’s a significant jump from the 9.6 million posts removed in the first quarter.

Furthermore, the company removed around 8.7 million posts connected to “terrorist” organizations, up from the 6.3 million posts removed in the first quarter. At the same time, the report stated that it had removed around 4 million pieces of content spread by “organized hate groups.” That is a decrease from the previous quarter, when Facebook removed approximately 4.7 million pieces of such content.

How have civil rights groups reacted to the presented data?

According to Facebook, the data speaks volumes about its dedication to preventing fake news, false information, hate speech, and hateful content in general on its platform.

However, civil rights groups downplayed the data in the report, saying Facebook wasn’t transparent enough about the prevalence of “hateful content” circulating across all of its platforms. Without that context, they said, the figures on the removal of hateful content are not as meaningful.

According to Facebook, efforts to deal with harmful content were hampered because the company had fewer reviewers at its offices during the pandemic. Instead, it had to rely more on automation to review potentially hateful content and misinformation spread on its platform.

That reduced the number of actions against content associated with potential child sexual exploitation and self-harm, as explained by Facebook executives via a conference call and within the report.

