
Is Samsung faking your Moon photos?

Samsung has responded to recent Reddit posts alleging that their proprietary “Scene Optimizer” tech produces images that are not “entirely genuine.”


Quick Answer: No, but it raises a larger question about how A.I. and machine learning are used to enhance digital photography.

Better dig up Stanley Kubrick because we’ve got another conspiracy theory involving technology and the Moon.

Of course, this is nowhere near as juicy as the accusations that the famed director was covertly hired by the government in the late 60s to produce a Moon Landing TV special (at Area 51!) to help convince Americans the U.S. had won the space race.

Still, we got your attention, didn’t we?

This particular debate revolves around Samsung’s proprietary “Scene Optimizer” tech, which has been around since the Galaxy S10 series.

Here’s how it works

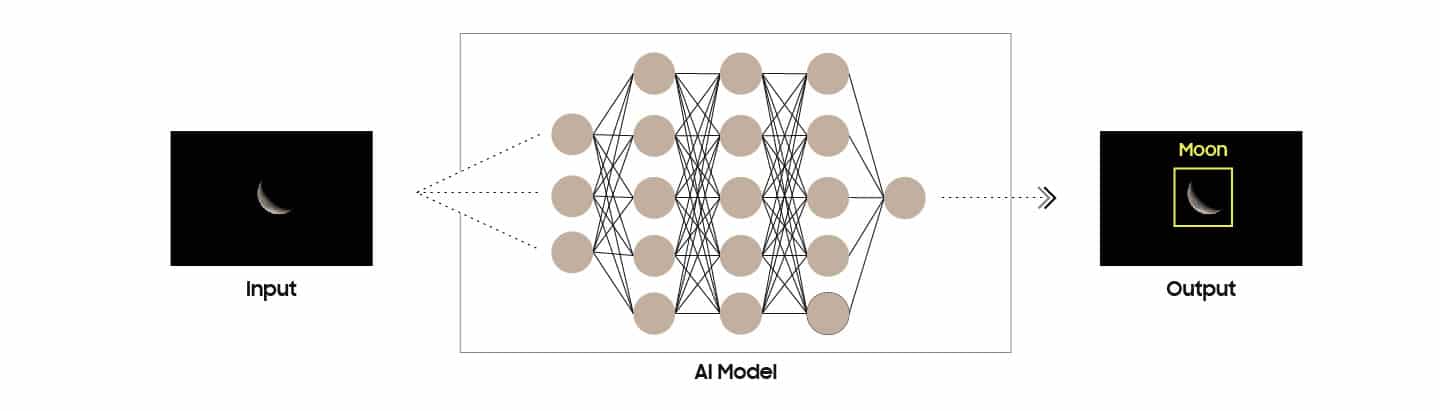

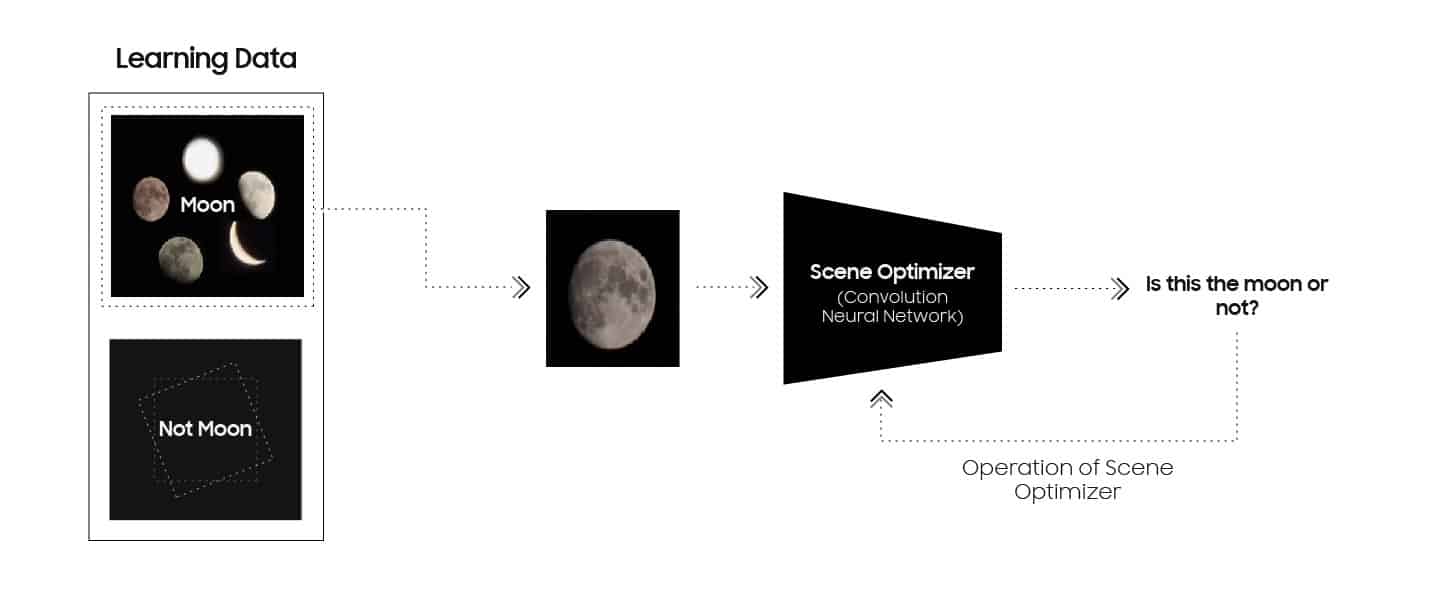

Scene Optimizer utilizes Machine Learning (an implementation of Artificial Intelligence) to help identify certain common photography situations, like “Food,” “Sunrise,” “Animals,” etc., and enhance specific aspects of those images.

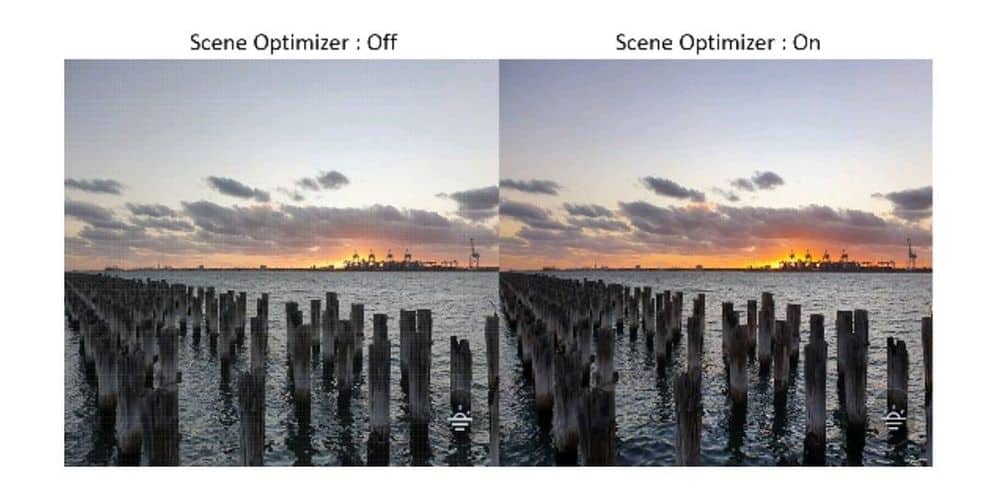

For instance, in one sunset example, Scene Optimizer deepened the hues of the sunset, sharpened the definition of the clouds, and boosted the contrast around the water.

Since the Galaxy S21 series, Scene Optimizer has been able to recognize the moon when paired with Space Zoom, the 100x zoom function that debuted on the Galaxy S20 Ultra. Together, they let amateur astrophotographers take stunningly clear photos of the moon.

Even Marques Brownlee was impressed by the tech.

Samsung went so far as to publish a blog post highlighting how Scene Optimizer with Space Zoom can help take photos of the 2021 Blood Moon Total Eclipse.

Since the launch of Space Zoom, there has been some debate in the digital photography world as to whether these A.I.-aided images are “cheating,” but Samsung generally shrugged these off.

This is where things get interesting

That brings us to March 10, 2023, when an intrepid Reddit detective named u/ibreakphotos conducted their own series of experiments.

It’s worth reading through all three posts, but in short, they took a stock image of the moon, shrank it down to 170 x 170px, blurred it beyond recognition, and then took a photo of the image from across a darkened room.

What they found is that the software had filled in a lot of detail that would’ve been (allegedly) impossible for an optical sensor to pick up, because there was no photographic data aside from the blurry, Moon-reminiscent image.
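To see why the blurred source matters, here is a minimal Python sketch of the general idea. It is not u/ibreakphotos's actual procedure: the "photo" is synthetic random noise standing in for fine lunar detail, the 340px starting size is an assumption, and a simple box blur replaces whatever blur the Redditor used. The `detail` score (average difference between neighboring pixels) is also just an illustrative measure.

```python
import random


def make_detailed_image(size, seed=0):
    """Synthetic stand-in for a sharp photo: random pixel values in [0, 255]."""
    rng = random.Random(seed)
    return [[rng.randint(0, 255) for _ in range(size)] for _ in range(size)]


def downscale(img, factor):
    """Shrink the image by averaging each factor x factor block into one pixel."""
    n = len(img) // factor
    out = []
    for by in range(n):
        row = []
        for bx in range(n):
            block = [img[by * factor + dy][bx * factor + dx]
                     for dy in range(factor) for dx in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def box_blur(img, radius):
    """Replace each pixel with the mean of its neighborhood (edges are clipped)."""
    n = len(img)
    out = []
    for y in range(n):
        row = []
        for x in range(n):
            vals = [img[yy][xx]
                    for yy in range(max(0, y - radius), min(n, y + radius + 1))
                    for xx in range(max(0, x - radius), min(n, x + radius + 1))]
            row.append(sum(vals) / len(vals))
        out.append(row)
    return out


def detail(img):
    """Illustrative detail score: mean absolute difference between horizontal neighbors."""
    diffs = [abs(row[x + 1] - row[x]) for row in img for x in range(len(row) - 1)]
    return sum(diffs) / len(diffs)


original = make_detailed_image(340)   # assumed starting size
small = downscale(original, 2)        # 170 x 170, as in the experiment
blurred = box_blur(small, 3)          # box blur as a stand-in for the real blur

print("detail, original:", round(detail(original), 2))
print("detail, 170px:   ", round(detail(small), 2))
print("detail, blurred: ", round(detail(blurred), 2))
```

Each step lowers the detail score, and none of the lost detail can be recovered from the blurred pixels alone. Anything sharper in the final photo has to come from somewhere else.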

In their final post on the matter, u/ibreakphotos addressed some of the pushback they had received:

“Many people seem to believe that this is just some good AI-based sharpening, deconvolution, what have you, just like on all other subjects. Others believe that it’s a straight-out moon.png being slapped onto the moon and that if the moon were to gain a huge new crater tomorrow, the AI would replace it with the “old moon” which doesn’t have it. BOTH ARE WRONG. What is happening is that the computer vision module/AI recognizes the moon, you take the picture, and at this point a neural network trained on countless moon images fills in the details that were not available optically.” -via u/ibreakphotos

After initially publishing an explainer in Korean only, Samsung responded on March 15 with a blog post titled “How Samsung Galaxy Cameras Combine Super Resolution Technologies With AI Technology to Produce High-Quality Images of the Moon.”

This SEO-optimized press release rehashes their old defenses of the tech while laying out a few more specifics.

Samsung responds

To keep it short: when you go past 25x zoom on your Samsung Galaxy S21+’s camera while focusing on the moon, the sensor will quickly snap 10 or more images to create a quick average.
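The “quick average” part is a standard noise-reduction trick, and a rough Python sketch shows why it helps. This is not Samsung's code: the scene, the noise level (`noise_sigma`), and every function name here are made up for illustration, and a real camera would also need to align the frames before combining them.

```python
import random


def capture_frame(scene, noise_sigma, rng):
    """Simulate one noisy exposure of the same scene (hypothetical sensor model)."""
    return [pixel + rng.gauss(0, noise_sigma) for pixel in scene]


def average_frames(frames):
    """Average the frames pixel by pixel."""
    return [sum(values) / len(values) for values in zip(*frames)]


def rms_error(image, reference):
    """Root-mean-square difference between an image and the true scene."""
    return (sum((a - b) ** 2 for a, b in zip(image, reference)) / len(reference)) ** 0.5


rng = random.Random(42)
scene = [rng.uniform(0, 255) for _ in range(5000)]                 # the "true" image
frames = [capture_frame(scene, 20.0, rng) for _ in range(10)]      # a 10-shot burst

single_error = rms_error(frames[0], scene)
stacked_error = rms_error(average_frames(frames), scene)

print("error, single frame:     ", round(single_error, 2))
print("error, 10-frame average: ", round(stacked_error, 2))
```

Averaging ten frames cuts random noise by roughly the square root of ten, but it can only clean up detail the lens and sensor actually captured. It cannot invent craters that were never in any of the frames.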

If the Artificial Intelligence detects “The Moon,” it will draw on its model (which was trained on hundreds of thousands of pictures of the moon) to fill in some of the blanks.

A secondary process analyzes the brightness of the photo to match the generated moon to the scene being recreated.
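Samsung doesn't spell out how that brightness step works, so treat the following as a guess at the simplest possible version: scale the generated detail so its average brightness matches what the sensor actually captured. The function names and the single-gain approach are assumptions, not Samsung's implementation.

```python
def mean_brightness(pixels):
    """Average pixel value of a grayscale image stored as a flat list."""
    return sum(pixels) / len(pixels)


def match_brightness(generated, captured, max_value=255.0):
    """Scale the generated pixels so their mean matches the captured frame's mean.

    Hypothetical single-gain approach; values are clipped at max_value.
    """
    current = mean_brightness(generated)
    if current == 0:
        return list(generated)          # nothing to scale
    gain = mean_brightness(captured) / current
    return [min(max_value, pixel * gain) for pixel in generated]


captured_moon = [40, 60, 80, 100]       # dim moon as the sensor saw it
generated_moon = [100, 150, 200, 250]   # brighter, more detailed AI output

matched = match_brightness(generated_moon, captured_moon)
print("captured mean:", mean_brightness(captured_moon))
print("matched mean: ", round(mean_brightness(matched), 2))
```

The relative contrast between pixels (the “detail”) survives, while the overall exposure follows the real scene, which is why a dim or hazy moon would still look dim in the final shot.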

Does this really answer u/ibreakphotos’s critiques? Maybe…?

Artificial Intelligence has been a part of digital camera technology for a while now, and it’s not going away any time soon (see also Google’s Magic Eraser tech from the Pixel 6).

Generally, this seems to be part of a larger conversation in the digital photography world as A.I. implementations become more seamlessly integrated into regular user experiences.

In the world of magic (I know, I know, bear with me), they use the term “Too Perfect” to describe an element of a trick that is “so right” for the illusion that it will make a non-magician instantly figure out how the effect was performed.

Circling back to Marques Brownlee’s demo video, the way the sharp image of the Moon snaps into view certainly seems “Too Perfect,” but maybe we should just learn to sit back and enjoy the digital sleight-of-hand, right?

Ultimately, what Samsung is doing here with Space Zoom and images of the Moon is no different than when the Scene Optimizer tech makes green grass just a little bit greener.



Chris

September 13, 2023 at 1:07 am

Explain how i can take a clear picture of a license plate from almost a mile away? Ive been taking moon pictures for 6 months until just today i found out jealous apple users made up a conspiracy…and the iphone 15 is a dud too