Translation technology: Breaking down language barriers

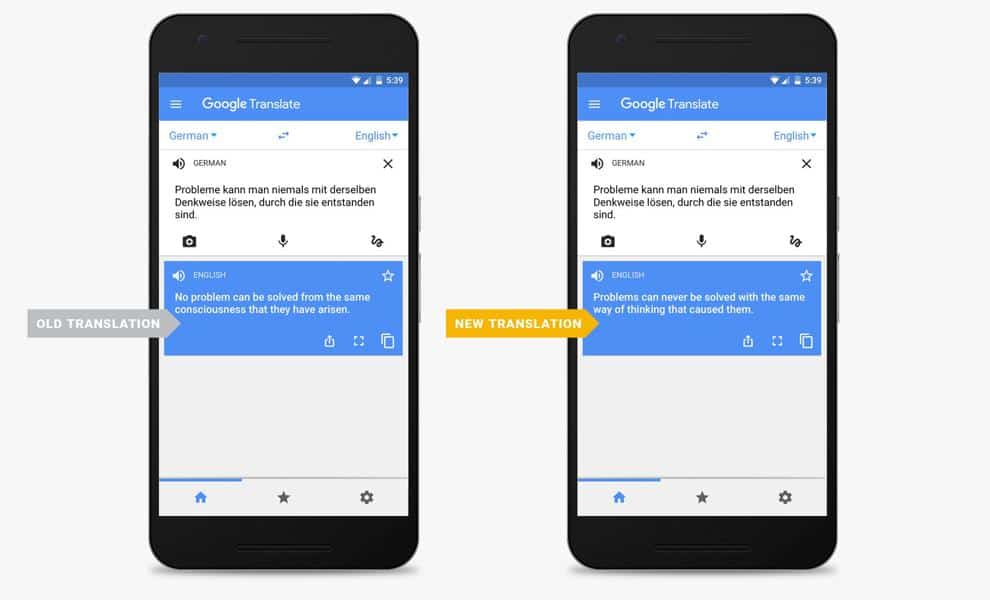

Translation technology has come a long way.

Picture a world where you can talk to anyone, from anywhere, without having to learn a new language. That's exactly what companies like Google are promising users with the new Pixel Buds.

The new wireless headphones were revealed earlier this month at an event in San Francisco, California, on the same day as the Google Pixel 2. By now you're probably thinking that Google's new wireless headphones sound a lot like Apple's AirPods, and you're right. The two products undoubtedly have a lot in common: they both cost $159.00, they're both wireless, and they're both highly sought after by consumers.

Despite these similarities, there is one major difference between Apple's AirPods and Google's Pixel Buds. During the launch, Google announced that its headphones can translate between 40 languages in real time, according to the Kansas City Star.

As these technologies advance, it's worth asking whether it's still necessary to make the effort to learn a new language. In other words, will technology remove the need to know someone's native language in order to communicate with them? For now, it's hard to say, but one thing is certain: communicating with someone without any understanding of their culture, beliefs, ideas, and language can draw unwanted attention, depending on where you are and who you're speaking with.

Aside from making it possible to communicate with millions of people around the globe, this kind of translation technology, a type of natural language processing product, could also find its way into business. However, not all industries are rushing to make the switch, and for good reason.

To Make the Switch or Not to Make the Switch: That is the Question

In the healthcare industry, for instance, doctors, nurses, and other healthcare workers need to understand what patients tell them in order to diagnose injuries correctly. That's why accurate translation is now a priority for health services. If healthcare workers rely on an electronic translator during an emergency, a single mistranslation could mean the difference between life and death for the patient.

Another thing to consider: what if the patient is unconscious and the only person with them doesn't speak the local language? How can doctors assess the injuries and move forward with saving this person's life? Without help, they can't. This is why hospitals like JFK Medical Center encourage patients to use the available interpretation services for confidential medical discussions. These services provide a face-to-face interpreter who can tell healthcare workers when the injury happened, how it happened, and what has been done for the patient up to that point.

The point is, technology has changed the way we learn languages. With translation constantly at our fingertips, people can make themselves heard without letting a language barrier hold them back.

As the economy becomes increasingly globalized, demand for these technologies will continue to grow. Just remember: although it's useful, machine translation should not be your primary way to learn a new language. Instead, it should be used as a stepping stone (or blueprint) for gaining further insight into not only the language but also the people who speak it. This makes learning a new language feel less repetitive and encourages you to keep going.

Thanks for reading! Did I miss anything vital? What are some other pros and cons of machine translation apps and tools? Feel free to leave a comment below.