Facebook will now give you more info about the COVID-19 articles you share

This won’t stop people from sharing bad information.


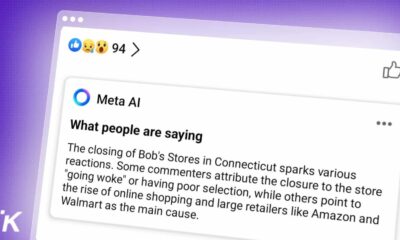

Recently, Facebook has been actively working to limit the spread of potentially harmful COVID-19 fake news and misinformation. To that end, it has introduced a new notification screen that gives users more context about an article before they share it, including its source and when it was first shared.

According to Facebook, the goal is for people to understand a piece of content’s source and relevance before hitting that share button. If needed, the screen will also point them toward Facebook’s COVID-19 Information Center for reliable and current information. This initiative builds on an earlier effort to combat the spread of older links that were regularly presented as new in order to mislead people about current events. Recently, Facebook deleted a whopping seven million posts spreading false COVID-19 information.

Since the start of the pandemic, Facebook has been trying to deal with the spread of coronavirus conspiracy theories. Its efforts are aimed not just at stopping their spread, but also at replacing them with vetted COVID-19 information from reliable medical authorities.

Later in the pandemic, it also had to deal with anti-mask groups that started spreading false news and videos about the “dangers of wearing masks.” Facebook started banning these groups one by one. It also started placing anti-misinformation content into the feeds of users who had interacted with fake coronavirus news and misinformation.

In May, Facebook’s headaches grew even bigger when the Plandemic video went viral. As if that wasn’t enough, one of its trusted partners, Breitbart, presented a video full of misinformation last month. The video made claims about measures to stop the spread of COVID-19, as well as about cures for it. Despite Facebook’s efforts to remove the video from its platform, it somehow managed to regularly pop up and is still live. In a later statement, Facebook said it would investigate why the video was live for that long.

Facebook has also stated that users won’t be notified when sharing content from global health authorities and government health organizations that it considers credible, such as the World Health Organization. On the other hand, Facebook users will always be notified when they try to share out-of-date or fake information from untrustworthy sources.

Will this simple pop-up affect what users share on the social media platform?
