WhatsApp’s CEO is definitely not a fan of Apple’s controversial new photo-scanning feature

Apple’s upcoming feature that scans iCloud photos for child abuse material is drawing fire from the tech community.


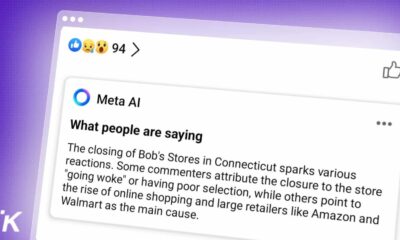

Apple’s plans to scan iPhones for child sexual abuse material (CSAM) are drawing fire from the tech community. The latest rant against the tool’s potential for abuse comes from Facebook’s head of WhatsApp, Will Cathcart. His overall take? He’s “concerned.”

See, while Apple’s tool is focused on scanning for CSAM, it could just as easily scan for any content that Apple or anyone else chooses. That’s the crux of Cathcart’s reasoning: he calls it an “Apple built and operated surveillance system that could very easily be used to scan private content for anything they or a government decides it wants to control.”

An Apple spokesperson told Engadget that Apple disputes “Cathcart’s characterization of the software, noting that users can choose to disable iCloud Photos.”

They also said the system is trained on “known” images provided by the National Center for Missing and Exploited Children (NCMEC) and other organizations, and that it can’t be tweaked regionally as it’s “baked into iOS.”

Why should Cathcart’s words trouble us? Well, as head of WhatsApp, he’s already involved in anti-CSAM measures. WhatsApp took a different approach, making it easier to report questionable images without breaking end-to-end encryption. The service reported over 400,000 cases to NCMEC last year, based on user reports.

Then again, Facebook might just be taking any chance it gets to roast Apple on its own privacy record. I mean, Apple’s CEO Tim Cook has been roasting Facebook for years now…

